Exploring a New Dataset With Python: Part I

We’re taking it back to the basics in this article.

Why?

The day of a Digital Marketer is busy. We’re pulled in all sorts of different directions and are responsible for a lot of different things. In my personal experience, it’s easy to get caught up in non-stop execution and forget that taking the time to sit with, think about, and explore a topic is also a necessary part of many things we do.

In this case, we’re talking about new datasets. As Digital Marketers, we’re often given datasets that another team and/or company has been collecting, sometimes for years, that is brand new to us. Usually they’re turning to us for a better way to manage the data or to transform it into something more user-friendly, like an interactive dashboard.

When you first open up the spreadsheet and see all of the foreign columns and rows and acronyms that you didn’t create, the feelings of jumping in and getting started might start to build. There’s a lot of charts to be made and they won’t make themselves!

BUT, this is when it’s time to remember everything you’ve learned from your meditation app - take a breath and remember that this whole process will be easier if you set aside time to explore the dataset, familiarize yourself with it, understand what is being tracked and what will be important for your audience to learn from it.

There’s a lot to unpack in a new dataset, so I’m splitting the exploration process into two parts:

- The Basics

- Visualizations

This article will go in-depth on Part I: The Basics. Part II: Visualizations will be covered in a future article.

We’ll be using Python to complete both parts. It’s a great tool to go through the data exploration process with - you’ll get quick stats and breakdowns on the data, and can easily put visualizations together to identify trends and outliers all in one notebook.

The steps in this article will help you understand:

- What information is being collected

- How the dataset is set up

- How much information is in the dataset

- If there are any null values

- Basic statistics on each column

- How to prompt additional questions about the dataset that you can answer for further understanding

I’ll be taking notes along the way with my findings for each section related to the dataset I’m analyzing. I’ll be using a fake dataset that I whipped up on product sales for an animal mug company that I also made up. I’ve been drinking a lot of coffee, alright?

Let’s dive in!

Import libraries and your data file

All of the Python libraries shown here aren’t necessary to cover The Basics, but it will set you up to complete the visualizations later on as well. If you only want to import what’s necessary for this part, you only need pandas and numpy.

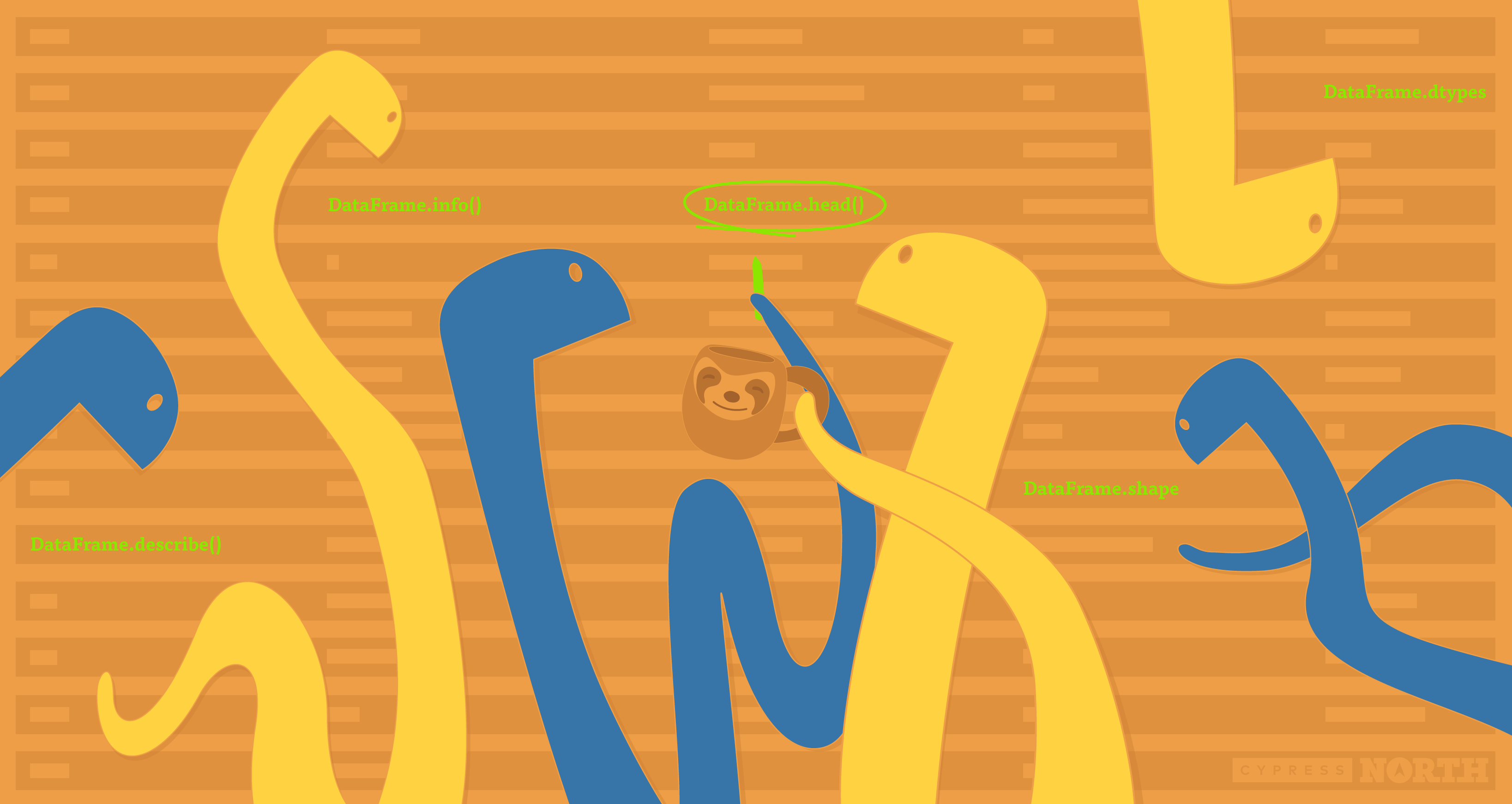

DataFrame.head()

Once my data is imported into a Jupyter Notebook, the first thing I always like to do is to look at the head() to see what columns the dataset contains.

This helps me get familiar with what the dataset is about and what is being tracked. If I were seeing this dataset for the first time, I could see that it’s sales data by day for different mug products as well as the amount spent on advertising for that day.

Notes:

Looking at this, I already have a few questions.

- What dates does the data begin and end on?

- Are there multiple rows for each date, i.e. a row for each product per day? The dates are out of order here so it’s hard to tell.

- How many unique products are there?

These questions will drive some additional exploration to familiarize ourselves with the data.

Before we get to that, I’m going to keep running through the initial exploration steps and keep track of any other questions or thoughts I come up with.

DataFrame.dtypes

This will tell you the datatype of each column. These are important to be aware of, especially if you’re going to be performing aggregations, creating visualizations, or really doing any other manipulation to the dataset.

Many of these things won’t work properly if the data is not coming in as the proper type (a number coming in as an object, for example). Take note of anything that doesn’t look right so you can convert it to the proper data type.

Notes:

- Columns that contain numbers are coming in as numerical data types - all good there.

- My product column that contains text values is coming in as an object. Depending on what type of work I plan on doing with this dataset, I may want to change it to a string.

- There is no dedicated date type for dates, so object is fine. There is a datetime module that can be imported to work with dates (and times) - we’ll make this conversion later in the article.

DataFrame.shape

Short and sweet, this returns the number of rows and columns in the dataset to give you an idea of how large it is.

Notes:

- My dataset has 284 rows and 5 columns

DataFrame.info()

This can be used as an alternative to df.dtypes and df.shape. It will give you that information plus the memory usage of the DataFrame and how many non-null values exist in each column, which can be useful.

If my TotalUnits column reported 280 non-null values, I would know that there are 4 null values in that column that I’d need to handle.

DataFrame.describe()

I saved the best for last in our data exploration basics. Here we’ll get some summary statistics on our numerical columns. I’m adding round(2) to round the values to the nearest hundredth, but it’s not necessary to include, or you can change the decimal place it rounds to to your liking.

This gives a nice overview of the data in these columns: how many values there are (count), smallest (min) and largest (max) values, quartiles (25%, 50%, 75%), mean, and standard deviation.

If you have columns with object data types, you can still use df.describe(), you’ll just need to separate them out from the numerical columns - DataFrames with mixed data types will default to only returning the numerical summary.

Bonus Round! Q&A

Our initial exploratory steps are complete to get a lay of the new dataset land. Now, I’m going to go back and answer the lingering questions I came up with along the way.

What dates does the data begin and end on?

Here is where the datetime module will come in handy. I’m going to import that first and it will make some new, helpful options available to answer questions like these.

Then, I’m going to convert the Date column into a datetime data type.

If I check the dtypes again, you can see that my Date column is now converted:

I’ll now be able to check the start and end dates on the dataset. I can see that it starts on January 1, 2020 and ends on April 6, 200

Are there multiple rows for each date, i.e. a row for each product per day?

My main issue here is that the dates aren’t ordered. There is more than one way to get the answer to this, but all I’m going to do is order the dates to see what the first few rows look like.

To sort by the date column, you’ll need to use the datetime module to convert your Dates to a datetime datatype. So if you skipped the last question, go back and see how it’s done!

Looking at the first 10 rows, it does indeed appear that there is a row for each product’s sales and advertising cost per date.

How many unique products are there?

All I have to do for this one is use nunique() to get the number of unique values in my products column. I can see that the dataset is reporting on 8 different products.

Continue on to Part II

We’ve completed The Basics and we’ve already learned a lot about our new dataset! Check out Part II where we continue the data exploration process with Python to create some visualizations and understand more about our data.

![Data - Blog - Google Collab [Background]](https://cypressnorth.com/wp-content/uploads/2024/03/Data-Blog-Google-Collab-Background-640x360.jpg)